Abstract

I will give a self-contained introduction to the theory of the neural network function class and its applications to image classification and to the numerical solution of partial differential equations. The following topics will be covered:

* Definition of the neural network function class as a generalization of classical finite element functions

* Deep ReLU neural networks versus classical piecewise linear finite element functions

* Classical approximation theory of neural network functions

* New optimal approximation theory of stable neural network functions

* Classic machine learning methods: logistic regression and support vector machines

* Deep learning: convolutional neural networks (CNN) for image classification

* MgNet: a special CNN obtained from a minor modification of the classical multigrid method

* Application and error analysis of neural networks for the numerical solution of partial differential equations (PDEs)

* Numerical quadrature and Rademacher complexity analysis

* Old and new training algorithms for machine learning and numerical PDEs

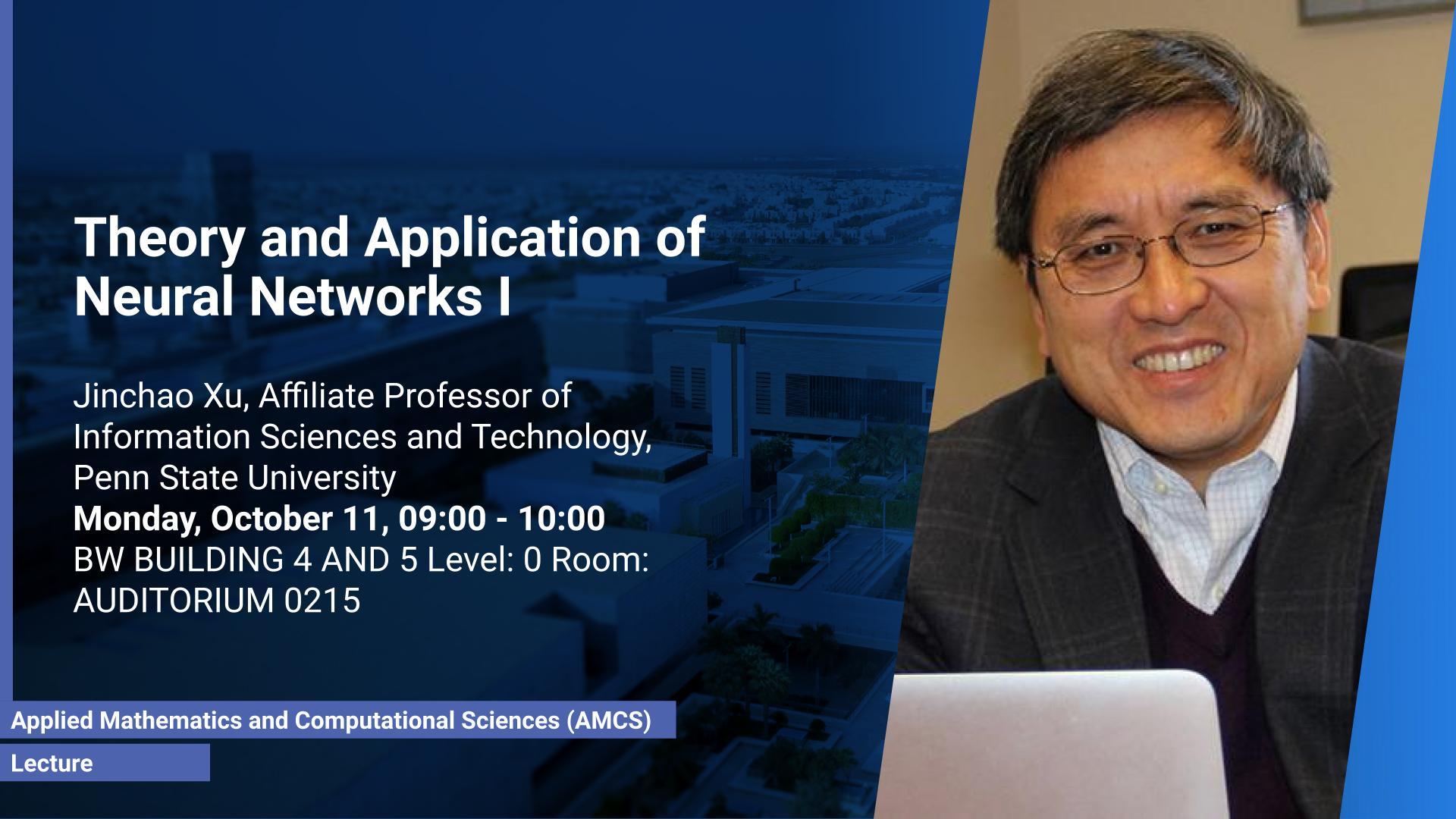

Brief Biography

Jinchao Xu is the Verne M. Willaman Professor of Mathematics and Director of the Center for Computational Mathematics and Applications at the Pennsylvania State University. Xu’s research is on numerical methods for partial differential equations that arise from modeling scientific and engineering problems. He has made major contributions to the theoretical analysis, algorithmic development, and practical application of multilevel methods. He is perhaps best known for the Bramble-Pasciak-Xu (BPX) preconditioner and the Hiptmair-Xu preconditioner. Xu earned his doctoral degree at Cornell University in 1989. He is a Fellow of the Society for Industrial and Applied Mathematics (SIAM), the American Mathematical Society (AMS), and the American Association for the Advancement of Science (AAAS).