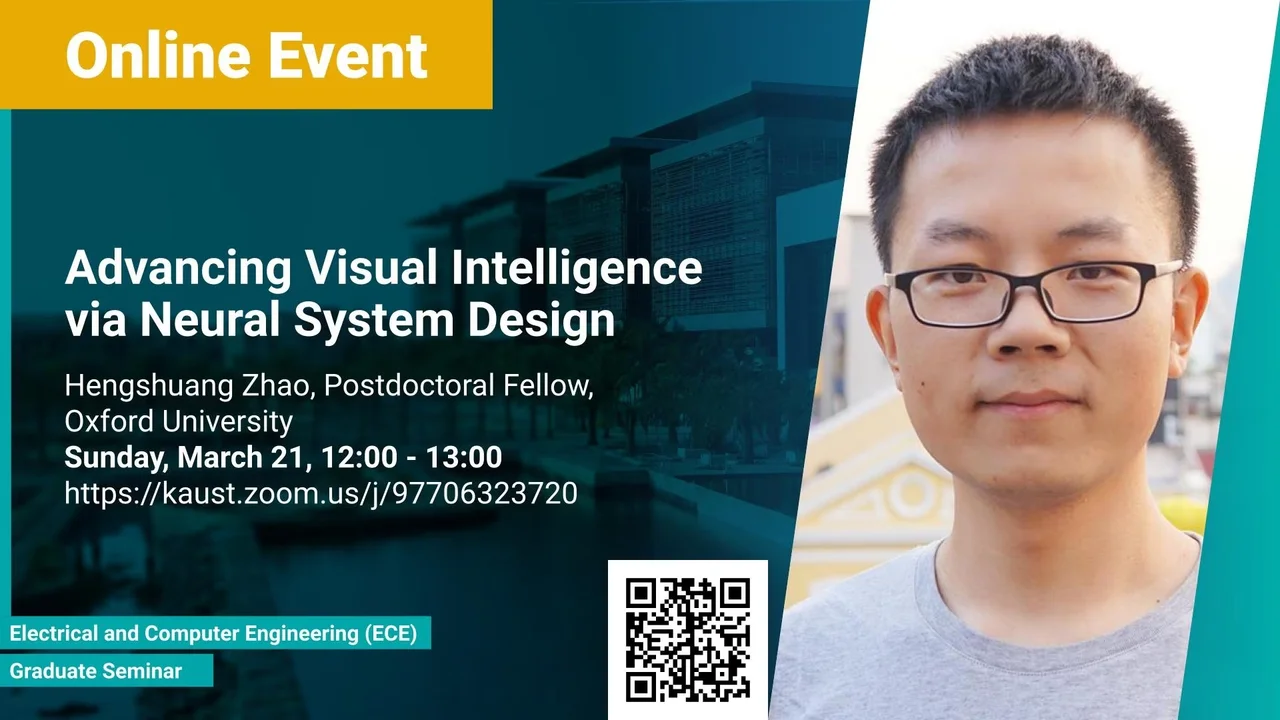

Advancing Visual Intelligence via Neural System Design

Overview

Abstract

Building intelligent visual systems is essential for the next generation of artificial intelligence. Such systems are a fundamental tool for many disciplines and benefit applications such as autonomous driving, robotics, surveillance, and augmented reality. An accurate and efficient intelligent visual system has a deep understanding of scenes, objects, and humans, and can interpret its surroundings automatically. 2D images and 3D point clouds are the two most common visual data representations in daily life, so powerful image understanding and point cloud processing systems are two pillars of visual intelligence, enabling artificial intelligence systems to understand and interact with the current state of the environment automatically. In this talk, I will first present our efforts in designing modern neural systems for 2D image understanding, including high-accuracy and high-efficiency semantic parsing structures and a unified panoptic parsing architecture. Then, we go one step further to design neural systems for processing complex 3D scenes, including semantic-level and instance-level understanding. Further, we show our latest work on unified 2D-3D reasoning frameworks, which are fully based on self-attention mechanisms. Finally, I will discuss the challenges, up-to-date progress, and promising future directions for building advanced intelligent visual systems.

Brief Biography

Dr. Hengshuang Zhao is a postdoctoral researcher at the University of Oxford. Before that, he obtained his Ph.D. degree from the Chinese University of Hong Kong. His general research interests cover the broad area of computer vision, machine learning, and artificial intelligence, with special emphasis on building intelligent visual systems. He and his team have won several championships in competitive international challenges such as the ImageNet Scene Parsing Challenge. He was recognized as an outstanding/top reviewer at ICCV’19 and NeurIPS’19. He received the Rising Star Award at the World Artificial Intelligence Conference 2020. Some of his research projects are supported by Microsoft, Adobe, Uber, Intel, and Apple. His works have been cited more than 5,000 times, with 5,000+ GitHub credits and 80,000+ YouTube views.