Provable and Measurable Machine Unlearning in Modern Learning Systems

This dissertation examines the foundations of machine unlearning under realistic system constraints and proposes both theoretically grounded unlearning algorithms and principled evaluation frameworks for modern learning systems.

Overview

Machine unlearning aims to remove the influence of specific data from trained machine learning models. It is motivated by privacy regulations, security concerns, and the need for trustworthy AI systems. Retraining from scratch on the remaining data provides the conceptual baseline, but it is often computationally infeasible in large-scale and distributed settings.

This dissertation studies two core questions: how to design unlearning mechanisms with formal guarantees under realistic system constraints, and how to measure unlearning completeness when strict guarantees are relaxed. It develops provable unlearning frameworks for inductive graph learning and federated learning, and introduces a principled evaluation methodology for assessing sample-level unlearning completeness in deep models, including large language models. Together, these results improve the rigor and auditability of machine unlearning in modern learning systems.

Presenters

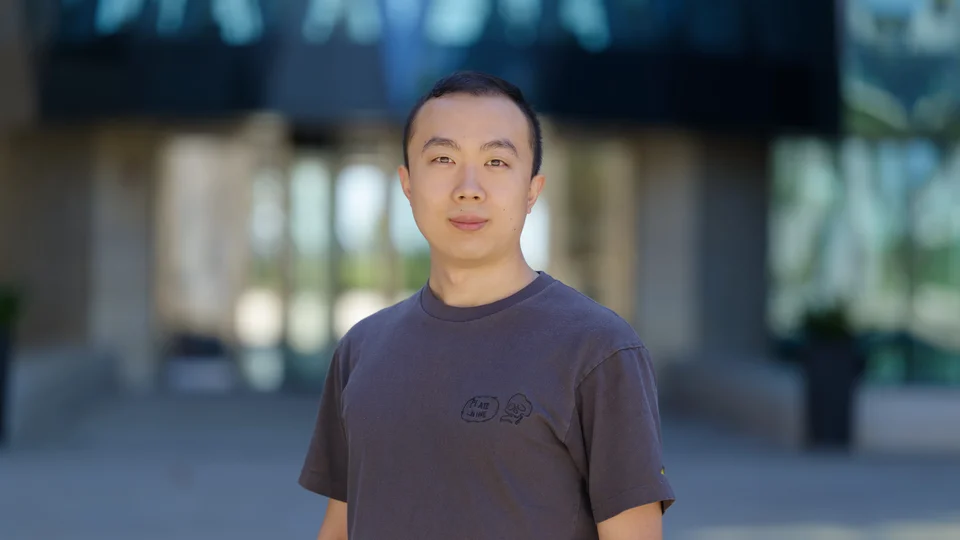

Brief Biography

Cheng-Long Wang is a PhD candidate in Computer Science (CS) at King Abdullah University of Science and Technology (KAUST), advised by Prof. Di Wang. His research focuses on machine unlearning, privacy-preserving machine learning, and trustworthy AI systems. His work explores both theoretical foundations and system-level design for provable and measurable machine unlearning in modern learning frameworks, including graph learning, federated learning, and large-scale deep models.