Abstract

It is widely acknowledged that the relentless surge in the Volume, Velocity and Variety of data, together with the simultaneous increase in computational resources, has stimulated the development of data-driven methods with unprecedented flexibility and predictive power. However, not every environmental study entails a large data set: many applications, ranging from astronomy to paleo-climatology, have a high associated sampling cost and are instead constrained by physics-informed partial differential equations. Over the past few years, a new and powerful paradigm has emerged in the machine learning literature that merges data-driven and physics-informed approaches, providing a unified framework for a whole spectrum of problems from data-rich/context-poor to data-poor/context-rich. In this talk, I will present this new framework and discuss some of the most recent efforts to reformulate it as a stochastic model-based approach, thereby allowing calibrated uncertainty quantification.

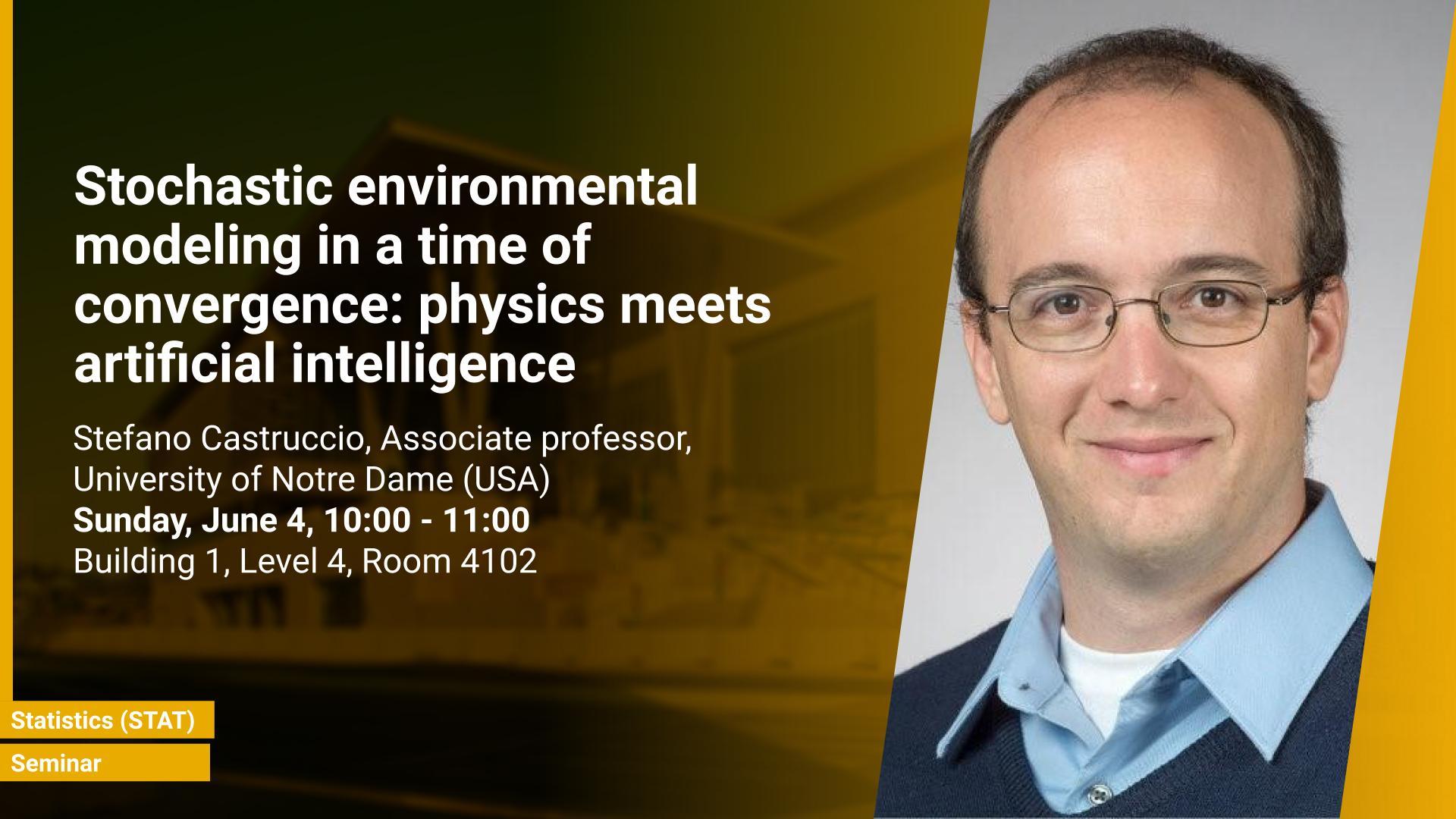

Brief Biography

Prof. Castruccio obtained his PhD at the University of Chicago (USA), and worked in Saudi Arabia and the United Kingdom before his current appointment as Associate Professor at the University of Notre Dame (USA). His main area of research is the development of spatio-temporal statistical models for environmental applications, ranging from the assessment of renewable energy resources to the assessment of mortality from air pollution. His focus is mostly on climate and weather models, and on the use of statistical and machine learning models that can act as stochastic approximations, providing a computationally affordable assessment of parameter sensitivity. His methodological research focuses on the development of non-stationary Gaussian processes in Euclidean and spherical domains, and on scalable multi-resolution inference for high-dimensional processes in space and time.