Research

At the Computer, Electrical and Mathematical Sciences and Engineering (CEMSE) Division at KAUST, we embrace a collaborative and interdisciplinary approach to research, leveraging expertise and cutting-edge resources across disciplines to tackle complex national and global challenges.

This collaborative approach has fueled impactful research projects—both fundamental and goal-oriented—in diverse areas, including telecommunications, extreme computing, artificial intelligence, and the analysis of environmental, physiological, and social data.

Building on our strong research foundation and aligning with KAUST's new strategic focus on national Research, Development, and Innovation (RDI) priorities, we are refining our research efforts to contribute to the four key RDI pillars:

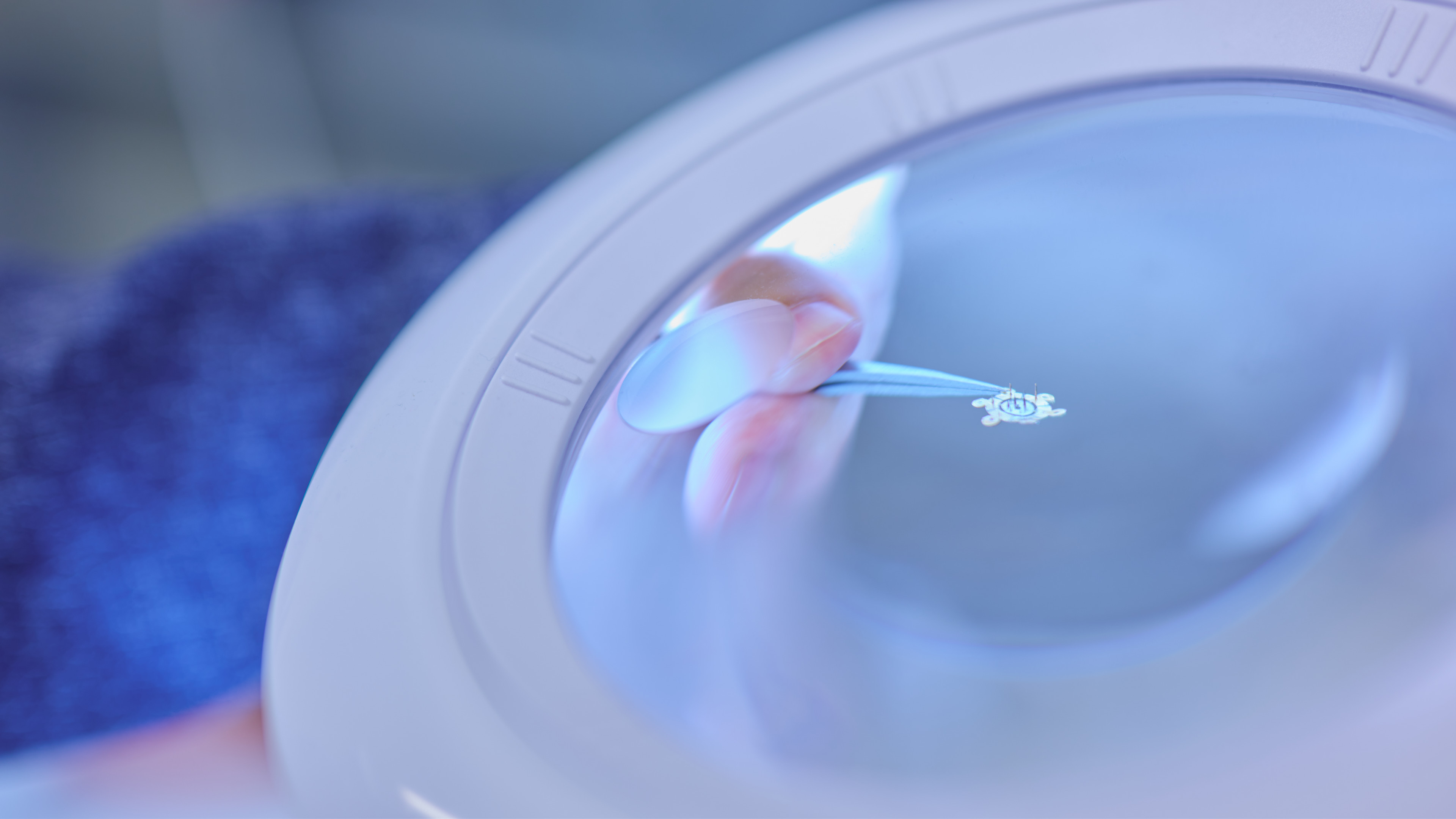

Health and Wellness

Sustainable Environment and Essential Needs

Energy and Industrial Leadership

Economies of the Future

Research Areas

Explore the research areas driven by a community of talented researchers dedicated to tackling national and global challenges and fostering a sustainable future.

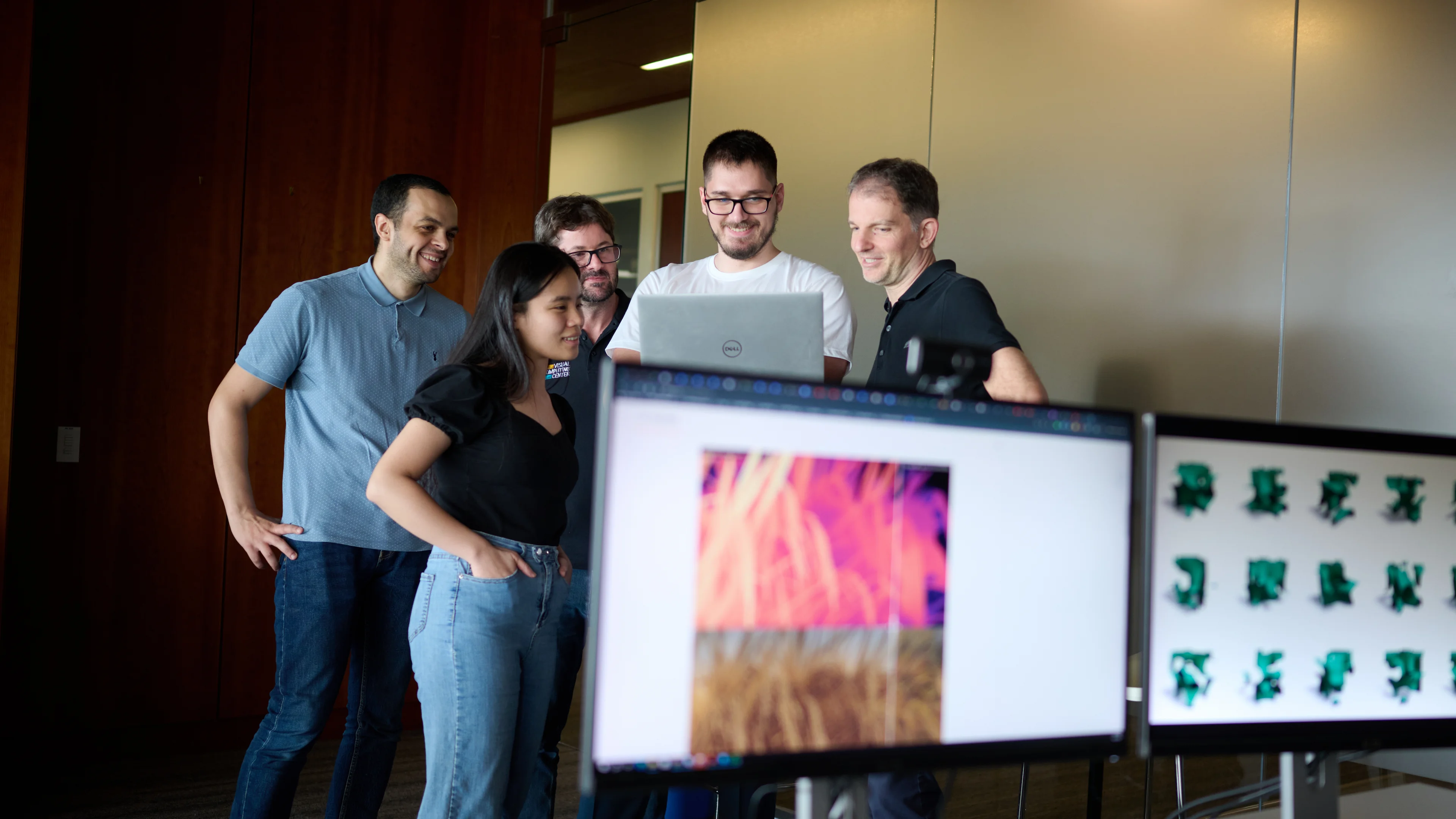

Faculty and Research Groups

CEMSE's faculty and research groups represent a wide range of disciplines and interests, reflecting a rich diversity of expertise. From artificial intelligence and robotics to data science, smart health and beyond, our research fosters countless opportunities for discovery, innovation and impact.

Shaheen III Supercomputer

KAUST is home to Shaheen III, the most powerful supercomputer in the Middle East, ranking 18th on the 2025 TOP500 list.

Powered by 2,800 NVIDIA GH200 Grace Hopper Superchips, Shaheen III provides our researchers access to the scale and performance required for advanced research across core scientific and engineering domains.

Research Facilities

KAUST empowers researchers with state-of-the-art facilities, some unique to the University, ensuring access to the best tools for groundbreaking discoveries. With sustained support from KAUST, our researchers pursue ambitious long-term projects, fostering both curiosity-driven exploration and impactful goal-oriented collaborations across disciplines.

KAUST Core Labs exemplify this commitment, advancing scientific discovery in Saudi Arabia and beyond.

Partner With Our Talents

The KAUST Industry Collaboration Program (KICP) was established to promote innovation by linking KAUST’s world-class research community with the broader world. The program serves as a hub for connection and collaborative research.

Becoming a member of the KICP means you have unrivaled access to a research community that can impact and elevate your organization’s future.

Numerous leading global and in-Kingdom industry partners are engaged with the program, including Boeing, IBM, Saudi Aramco, Dow, and SABIC. The KICP provides access to KAUST's research and technology, faculty and student talent, facilities, and training.