Theme Description

This research theme aims to improve machine learning techniques for analyzing and synthesizing spatial structures. The grand challenge of this theme is to develop the next generation of deep learning models for processing 3D meshes, point clouds, videos, and images. Our work includes both fundamental research on machine learning and applications to visual computing, urban planning, physics-based modeling, and geometry.

The Machine Learning for Spatial Structures theme encompasses the following problems:

- Architectural and Urban Design: this problem is about developing tools that let architects, urban planners, designers, civil engineers, and administrators explore possible solutions to urban design problems.

- Physics-Based Modeling: this problem includes developing machine learning techniques that replace or complement existing simulation techniques, and generating training data for machine learning techniques.

- Analyzing Geo-Spatial and Remote Sensing Data: this problem includes the analysis of aerial images, lidar scans, and videos for urban reconstruction and urban analysis using deep learning.

- Analyzing CAD Model Collections: this problem includes classical challenges such as segmentation, instance segmentation, hole filling, and model generation, addressed by analyzing large model collections.

- Synthetic Image and Model Generation and Detection: this problem includes generative modeling for 3D meshes, point clouds, images, and videos (for example, face generation), as well as the detection of generated models.

Example Projects

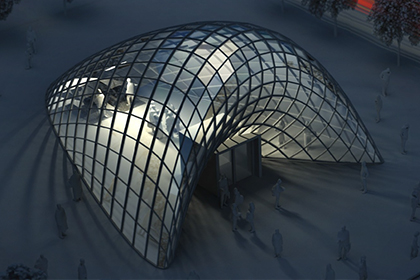

Architectural and Urban Design

This project is about the development of software tools for architects, urban planners, designers, civil engineers, or administrators to explore possible solutions to urban design problems.

For this research project, we consider multiple factors. Firstly, most practitioners in the field would like to have significant input into the design process. It is, therefore, our goal to integrate automatic computation and user interaction to leave enough control for the users to influence the design.

Secondly, successful tools need to integrate multiple types of potentially competing considerations. On the one hand, there are aesthetic considerations and user preferences, such as the smoothness of architectural surfaces or the designer's preference for straight or curvy streets.

On the other hand, there are functional considerations such as energy efficiency, shading, and efficient use of space. To address these issues, the project develops algorithms and software that account for both kinds of considerations.

In the past, we mainly tackled these problems with geometric and optimization techniques. In the future, we would like to combine geometric and optimization techniques with deep learning. Examples include learning discrete models for streets, parcels, and floor plans using generative adversarial networks, or initializing geometric optimization algorithms using deep learning.

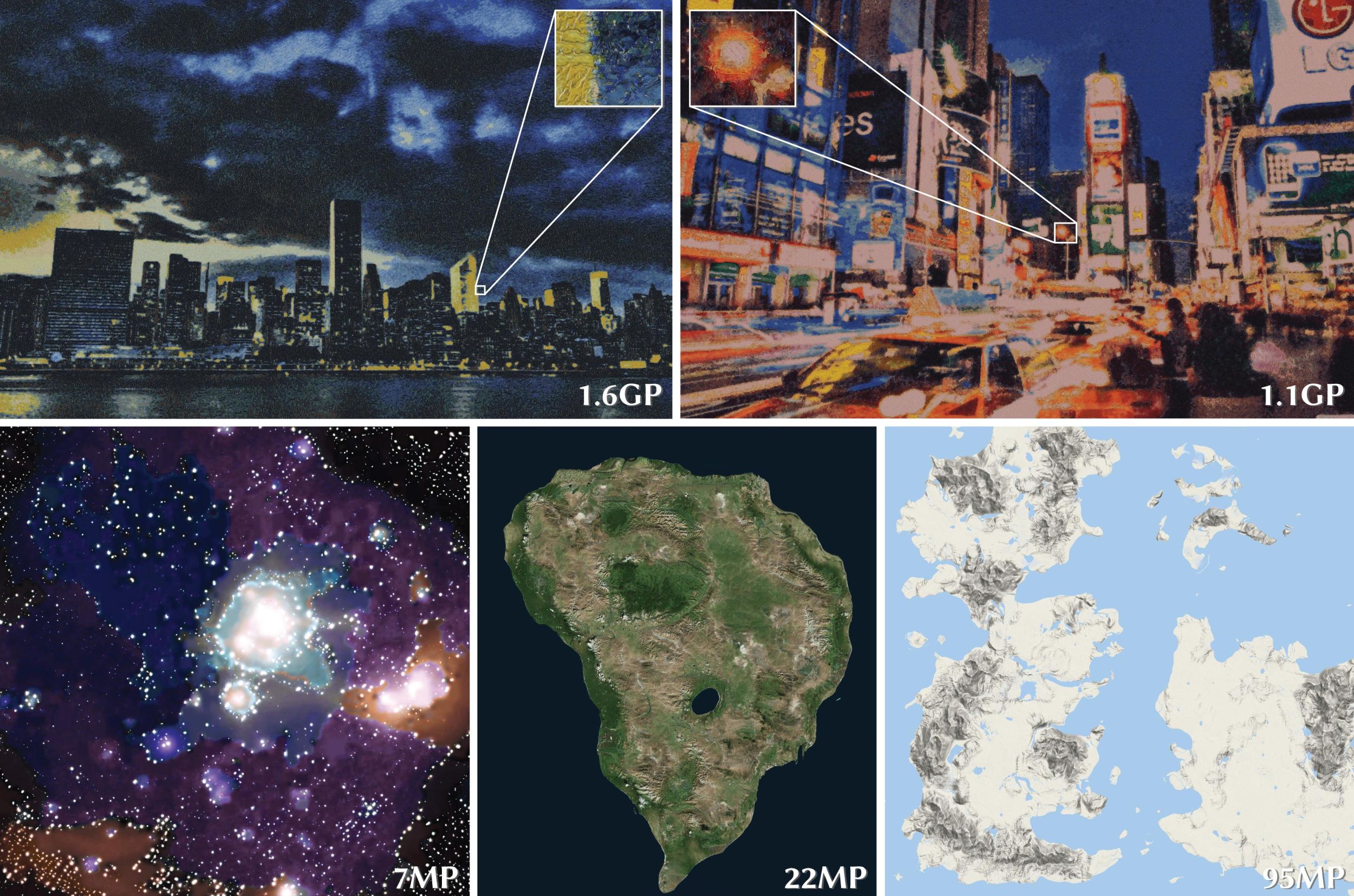

Analyzing Geo-Spatial and Remote Sensing Data

Given an urban region, e.g., one marked on a map, this project aims to analyze remote sensing data to obtain a semantic 3D reconstruction. Challenges include segmentation, object detection, registration, depth estimation, point cloud generation, mesh generation, and model fitting.

Semantic three-dimensional urban models are useful for urban planning, architecture, and civil engineering since they can be used to obtain a good overview of the current state of an urban environment. This is also useful for visualization and for planning future changes to the environment. For example, simulations can be used to evaluate flooding, shading, heat propagation, traffic, pollution, noise, etc., and new plans can be evaluated in the context of the existing environment.

Other applications benefiting from this project are mapping on mobile devices, in cars, and on desktop computers. To address these issues, the project applies a combination of machine learning and optimization techniques to large-scale urban reconstruction.

Synthetic Image and Model Generation and Detection

Generative models are powerful tools to synthesize new spatial structures. We would like to explore the use of generative models such as variational autoencoders, generative adversarial networks (GANs), and flow models to synthesize new images, videos, point clouds, and meshes.

Generative adversarial models can also be used to modify existing spatial structures, for example by interpolating between two structures or by applying semantic edits.
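As an illustration of interpolation with a generative model, the following minimal sketch blends two latent codes and decodes each intermediate code. It is not the method used in this theme: `toy_generator` is a hypothetical stand-in for a trained generator (here it simply maps a 2-D latent code to the corner coordinates of a rectangular footprint), and linear interpolation is only the simplest possible choice; other schemes, such as spherical interpolation, are often preferred for Gaussian latent spaces.

```python
from typing import List, Sequence

Vector = List[float]


def lerp(z0: Sequence[float], z1: Sequence[float], t: float) -> Vector:
    """Linearly interpolate between two latent codes (t=0 gives z0, t=1 gives z1)."""
    if len(z0) != len(z1):
        raise ValueError("latent codes must have the same dimension")
    return [(1.0 - t) * a + t * b for a, b in zip(z0, z1)]


def interpolate_latents(z0: Sequence[float], z1: Sequence[float], steps: int) -> List[Vector]:
    """Return `steps` evenly spaced latent codes from z0 to z1, endpoints included."""
    if steps < 2:
        raise ValueError("steps must be at least 2")
    return [lerp(z0, z1, i / (steps - 1)) for i in range(steps)]


def toy_generator(z: Sequence[float]) -> Vector:
    """Hypothetical stand-in for a trained generator (assumption for illustration).

    Treats the latent code as (width, height) and returns the flattened corner
    coordinates [x0, y0, x1, y1, x2, y2, x3, y3] of a rectangle at the origin.
    """
    w, h = z
    return [0.0, 0.0, w, 0.0, w, h, 0.0, h]


if __name__ == "__main__":
    # Decode each intermediate latent code to get a sequence of in-between shapes.
    for z in interpolate_latents([1.0, 1.0], [3.0, 2.0], steps=5):
        print(z, "->", toy_generator(z))
```

With a real model, `toy_generator` would be replaced by the trained generator network, and the decoded outputs would be images, point clouds, or meshes rather than rectangle corners.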