Thursday, March 02, 2023, 12:00

- 14:00

Building 2, Level 2, Rooms 2286 and 2290 (in front of Dias Café)

KAUST Research Open Week (KROW) is a campus-wide event with a range of activities taking place; from k

Bio-Hackathon MENA 2023

Tuesday, February 07, 2023, 08:00

- 17:00

KAUST Hotel

Bioinformatics experts, don’t miss the opportunity to collaborate with researchers and field professionals at the BioHackathonMENA2023 event. BioHackathon events bring together a large number of people who meet on-site to discuss ideas and implement projects collaboratively during intensive coding sessions.

Associate Professor,

Bioengineering

Prof. Ricardo Henao, Associate Professor, BESE Division, KAUST

Wednesday, February 01, 2023, 12:00

- 13:00

Building 3, Level 5, Room 5220

We propose a structured latent ODE model that explicitly captures system input variations within its latent representation. Building on a static latent variable specification, our model learns (independent) stochastic factors of variation for each input to the system, thus separating the effects of the system inputs in the latent space. This approach provides actionable modeling through the controlled generation of time-series data for novel input combinations (or perturbations). Additionally, we propose a flexible approach for quantifying uncertainties, leveraging a quantile regression formulation.
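
The abstract does not spell out the quantile regression formulation it uses. As a minimal sketch of the general idea only, the standard quantile (pinball) loss below penalizes under- and over-prediction asymmetrically, so that minimizing it for a chosen level `tau` yields an estimate of that quantile. The function name and the plain-list inputs are illustrative assumptions, not part of the speaker's model.

```python
def pinball_loss(y_true, y_pred, tau):
    """Mean pinball (quantile) loss at quantile level tau, with 0 < tau < 1.

    Illustrative helper only: the talk's actual loss is not given in the abstract.
    """
    if not 0.0 < tau < 1.0:
        raise ValueError("tau must lie strictly between 0 and 1")
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("inputs must be non-empty and of equal length")
    total = 0.0
    for y, p in zip(y_true, y_pred):
        diff = y - p
        # Under-prediction (diff > 0) is weighted by tau,
        # over-prediction (diff < 0) by (1 - tau).
        total += max(tau * diff, (tau - 1.0) * diff)
    return total / len(y_true)
```

Fitting two such losses, for example at `tau = 0.05` and `tau = 0.95`, would give a lower and an upper bound that together form a 90% prediction interval, which is one common way a quantile formulation is used to quantify uncertainty.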

Prof. Gabriele Berg, Environmental Biology, Graz University of Technology (Austria)

Wednesday, December 07, 2022, 11:00

- 12:00

Building 5, Room 5220

Understanding and managing microbiomes offers promising perspectives for all health issues. The synergistic impact on the inter-linked plant microbiome of anthropogenic factors such as biodiversity loss, pollution, ozone depletion, climate change and changing biogeochemical cycles is less understood. Recent studies indicated a general shift of the plant microbiota characterized by a decrease of evenness and specificity, and an increase of r-strategist and hypermutator prevalence as well as antimicrobial resistance. The microbiome and resistome are interconnected and should be managed together through microbiome management.

Dr. Danesh Moradigaravand, Infectious Disease Epidemiology lab, BESE, KAUST

Monday, December 05, 2022, 12:00

- 13:00

Building 3, Level 5, Room 5209

In this talk, I will first present how the application of phylogenetic and phylodynamic methods to whole genome sequencing data of multidrug resistant bacterial pathogens provided an in-depth understanding of the epidemiology and evolution of these strains on epidemiological time scales. I will then discuss the characterization of the genomic repertoire of bacterial traits using a combination of machine learning, whole genome sequencing and large-scale phenotypic assays. I will then show how predictive modelling can be leveraged to predict bacterial features, e.g. antimicrobial resistance, growth, and horizontal gene transfer, from genomic biomarkers. I will finally discuss how large-scale phenotypic assays enabled us to identify genes underlying morphogenesis and biofilm formation.

Monday, November 28, 2022, 12:00

- 13:00

Building 2, Level 5, Room 5209

Biological systems are distinguished by their enormous complexity and variability. That is why mathematical modelling and computational simulation of those systems are very difficult, in particular for detailed models based on first principles. The difficulties start with geometric modelling, which on the one hand needs to extract basic structures from highly complex and variable phenotypes and on the other hand has to take statistical variability into account.

Computational Bioscience Research Center

Monday, November 21, 2022, 09:00

- 17:00

Virtual

The Computational Bioscience Research Center (CBRC) at King Abdullah University of Science and Technology (KAUST) is pleased to host the International Conference on Bioinformatics 2022 (InCoB2022). This year’s conference theme will be “Accelerating innovation to meet biological challenges: The role of bioinformatics”.

Associate Professor Diego Javier Jiménez Avella

Wednesday, October 05, 2022, 11:00

- 12:00

Building 3, Level 5, Room 5209

Abstract

In the Anthropocene, plastic pollution is a worldwide concern that must be tackled from d

PhD Student,

Computer Science

Thursday, June 30, 2022, 08:30

- 10:30

KAUST

In this dissertation, we combined artificial intelligence and machine/deep learning with chemical and biological properties to develop several computational methods to solve biomedical domain problems, specifically drug repositioning, and demonstrated their efficiencies and capabilities. We developed three network-based drug-target interaction (DTI) prediction methods using machine learning, graph embedding, and graph mining. These methods significantly improved prediction performance, and the best-performing method reduced the error rate by more than 33% across all datasets compared to the best state-of-the-art method. As it is more insightful to predict continuous values that indicate how tightly the drug binds to a specific target, we conducted a comparison study of current regression-based methods that predict drug-target binding affinities (DTBA). Our methods demonstrated their efficiency and capability by achieving high prediction performance and identifying therapeutic targets for several cancer types. We further conducted a lung cancer case study whose findings support the novel predicted targets.

Prof. Takashi Gojobori, Computational Bioscience Research Center, KAUST

Tuesday, May 24, 2022, 12:00

- 14:00

Building 19, Hall 3

The Computational Bioscience Research Center (CBRC) will be holding a student poster competition as part of its yearly conference. Core Labs booths will also be featured in this session.

Prof. Takashi Gojobori, Computational Bioscience Research Center, KAUST

Monday, May 23, 2022, 08:00

- 16:30

Building 19, Hall 1

The Computational Bioscience Research Center (CBRC) is pleased to invite the KAUST community to the KAUST Research Conference on Advances in Metagenomics and its Applications.

Michal Mankowski, Assistant Professor, Erasmus University Rotterdam; Elisa Laiolo, PhD Student, Red Sea Research Center

Tuesday, April 26, 2022, 13:00

- 14:00

KAUST

Elisa Laiolo: In her talk, Elisa will provide insights into the KAUST Metagenomic Analyses Platform (KMAP), which is the first catalogue of the global ocean genome and its partitioning among taxonomical and functional groups, providing insights into its high diversity and functional variety. Michal Mankowski: In his talk, Michal will explain techniques for combining simulation with optimization to design a boundaries-free CAS that aims to maintain the prioritization of organ transplants for pediatric, different blood types, and status 1 candidates while minimizing overall deaths.

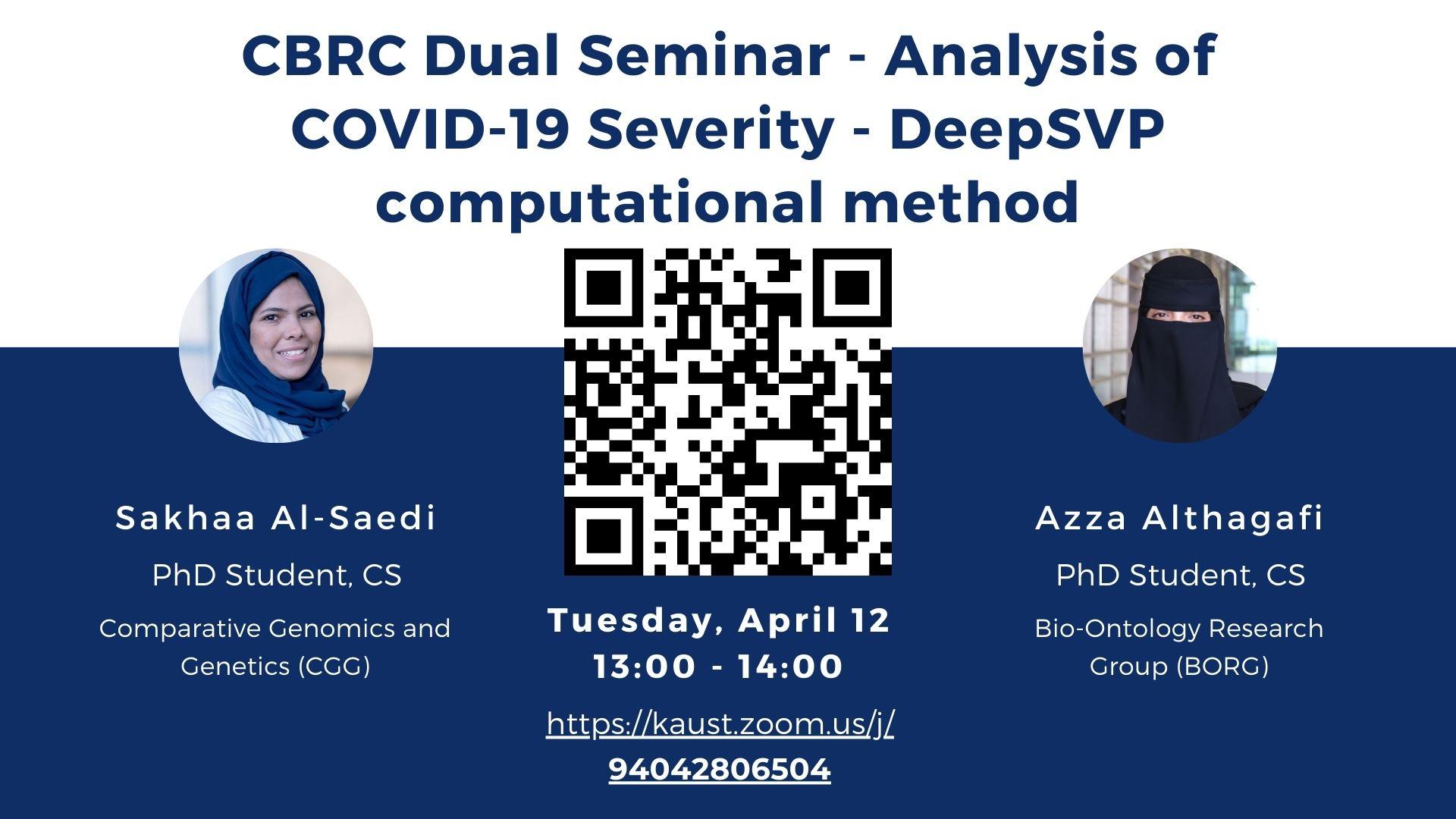

Sakhaa Al-Saedi, PhD Student; Azza Althagafi, PhD Student

Tuesday, April 12, 2022, 13:00

- 14:00

KAUST

Sakhaa Al-Saedi: We conduct a systematic genetic analysis of risk variants associated with increased COVID-19 severity. It leads to a better understanding of the genetic basis of the disease and identifies host genes that could be targeted to tackle the COVID-19 pandemic and reduce its death toll. Azza Althagafi: We developed DeepSVP, a computational method to prioritize structural variants involved in genetic diseases by combining genomic and gene function information. DeepSVP significantly improves the success rate of finding causative variants in several benchmarks and can identify novel pathogenic structural variants in consanguineous families.

Principal Research Scientist,

Comparative Genomics and Genetics

Monday, April 11, 2022, 11:00

- 12:00

KAUST

Dr. Katsuhiko Mineta will give a talk on "Population genomics of indigenous inhabitants in Arabian Peninsula". This talk will highlight research on the population genomic profiling of 957 unrelated individuals who self-identify with 28 large tribes in Saudi Arabia. The results of this research disclose a granular map of population structure in Arabia and the implications this will have for future genetic studies into diseases.

PhD Student,

Computer Science

Wednesday, March 30, 2022, 17:30

- 19:30

KAUST

Multi-label learning addresses the problem that one instance can be associated with multiple labels simultaneously. These labels are usually dependent on each other, to varying degrees and in different ways. Understanding and exploiting the Label Dependency (LD) is well-accepted as the key to building high-performance multi-label classifiers, i.e., classifiers having abilities including but not limited to generalizing well on clean data and being robust under evasion attacks.

PhD Student,

Computer Science

Wednesday, March 30, 2022, 14:00

- 15:00

KAUST

Knowing that metastasis is the primary cause of cancer-related deaths has incentivized research to unravel the complex cellular processes that drive metastasis. Advances in technology, specifically the advent of high-throughput sequencing, provide knowledge of such processes. This knowledge has led to the development of therapeutic and clinical applications. In this regard, predicting metastasis onset has also been explored using artificial intelligence (AI) approaches, namely machine learning (ML) and, more recently, deep learning (DL).

PhD Student,

Computer Science

Wednesday, March 30, 2022, 11:00

- 13:00

Building 5, Level 2, Room 5209

Abstract

Ontologies are a formalization of a particular domain through a collection of ax-

Juexiao Zhou, MS Student and Manola Moretti, Research Scientist

Tuesday, March 29, 2022, 14:00

- 15:00

Building 9, Level 2, Room 2322

Talk 1: PPML-Omics: a Privacy-Preserving federated Machine Learning system protects patients’ privacy from omic data. Talk 2: Peptide biopolymers in medical and environmental applications: an AFM and Raman spectroscopy perspective

PhD Student,

Computer Science

Tuesday, March 29, 2022, 14:00

- 15:00

Building 2, Level 3, Room 3270

Abstract

Many ontologies, in particular in the biomedical domain, are based on the Description Log

Tuesday, March 29, 2022, 10:30

- 12:30

Building 3, Level 5, Conference Room 5209

Abstract:

Unexpected high mutations detected in new emerging variants of concern (VOCs) of

PhD Student,

Computer Science

Monday, March 28, 2022, 14:30

- 15:30

Building 3, Level 5, Room 5209

Abstract

Genome-wide association studies (GWAS) assessed the effect of common variants on human dis

Thursday, March 03, 2022, 12:00

- 13:00

Building 9, Level 2, Room 2325

Dynamic programming is an efficient technique to solve optimization problems. It is based on decomposing the initial problem into simpler ones and solving these sub-problems beginning from the simplest ones. A conventional dynamic programming algorithm returns an optimal object from a given set of objects. We developed extensions of dynamic programming which allow us (i) to describe the set of objects under consideration, (ii) to perform a multi-stage optimization of objects relative to different criteria, (iii) to count the number of optimal objects, (iv) to find the set of Pareto optimal points for the bi-criteria optimization problem, and (v) to study the relationships between two criteria. The considered applications include optimization of decision trees and decision rule systems as algorithms for problem-solving, as ways for knowledge representation, and as classifiers, optimization of element partition trees for rectangular meshes which are used in finite element methods for solving PDEs, and multi-stage optimization for such classic combinatorial optimization problems as matrix chain multiplication, binary search trees, global sequence alignment, and shortest paths.
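
As a rough illustration of extension (iii), counting the number of optimal objects, the sketch below solves matrix chain multiplication, one of the classic problems named above. It returns both the minimum multiplication cost and the number of parenthesizations that achieve it. This is a textbook-style example written for this listing under the usual scalar-multiplication cost model; the function name `matrix_chain` and its interface are assumptions and do not reproduce the speaker's algorithms.

```python
from functools import lru_cache


def matrix_chain(dims):
    """Return (min_cost, optimal_count) for a matrix chain.

    Matrix i has shape dims[i] x dims[i + 1]. Cost is the number of scalar
    multiplications; optimal_count is how many distinct parenthesizations
    reach the minimum cost. Illustrative sketch, not the talk's method.
    """
    n = len(dims) - 1  # number of matrices in the chain
    if n < 1:
        raise ValueError("dims must describe at least one matrix")

    @lru_cache(maxsize=None)
    def solve(i, j):
        # Sub-problem: best way to multiply matrices i..j (inclusive).
        if i == j:
            return 0, 1  # a single matrix needs no multiplication
        best_cost, best_count = None, 0
        for k in range(i, j):  # last multiplication splits the chain after k
            left_cost, left_count = solve(i, k)
            right_cost, right_count = solve(k + 1, j)
            cost = left_cost + right_cost + dims[i] * dims[k + 1] * dims[j + 1]
            if best_cost is None or cost < best_cost:
                best_cost, best_count = cost, left_count * right_count
            elif cost == best_cost:
                # Another split is equally good: add its optimal solutions.
                best_count += left_count * right_count
        return best_cost, best_count

    return solve(0, n - 1)
```

For example, `matrix_chain([10, 30, 5, 60])` has a single optimal order, while a chain of equal square matrices such as `matrix_chain([2, 2, 2, 2])` has two. Carrying the count alongside the cost is what turns the conventional "return one optimum" recursion into a description of the whole set of optima.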

Professor Charlotte Hauser, Laboratory for Nanomedicine

Tuesday, March 01, 2022, 13:45

- 14:00

Building 19, Hall 1

Abstract

Coral reef degradation has been a major threat to our marine ecosystem.

Professor Paul Matsudaira, National University of Singapore

Tuesday, February 15, 2022, 16:30

- 17:30

KAUST

Abstract

In the mammalian intestine, stem cells (ISCs) located in basal crypts replicate and tran

Dr. Hector Martinez, CTO at BICO

Tuesday, February 08, 2022, 16:30

- 17:30

KAUST

About the speaker

From Mexico to the US to Sweden, Dr.