Events

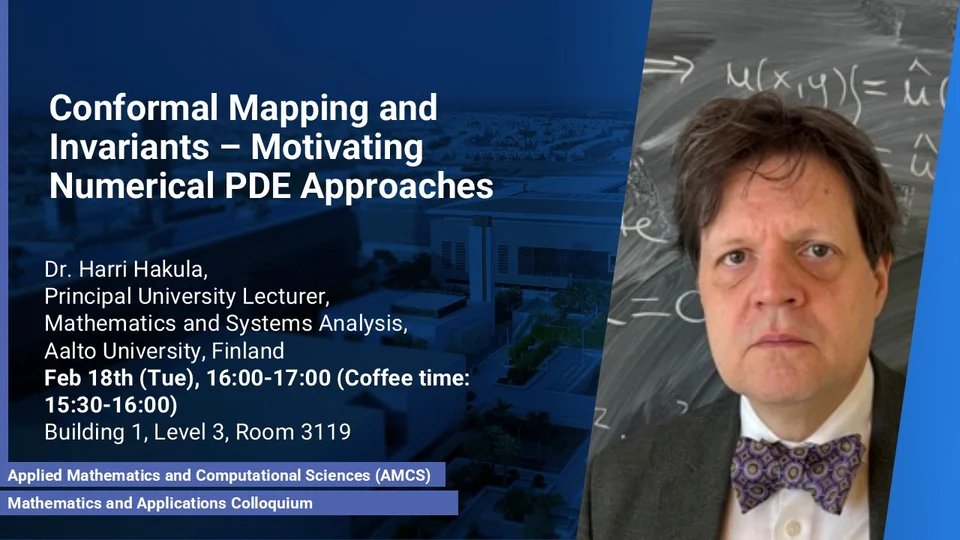

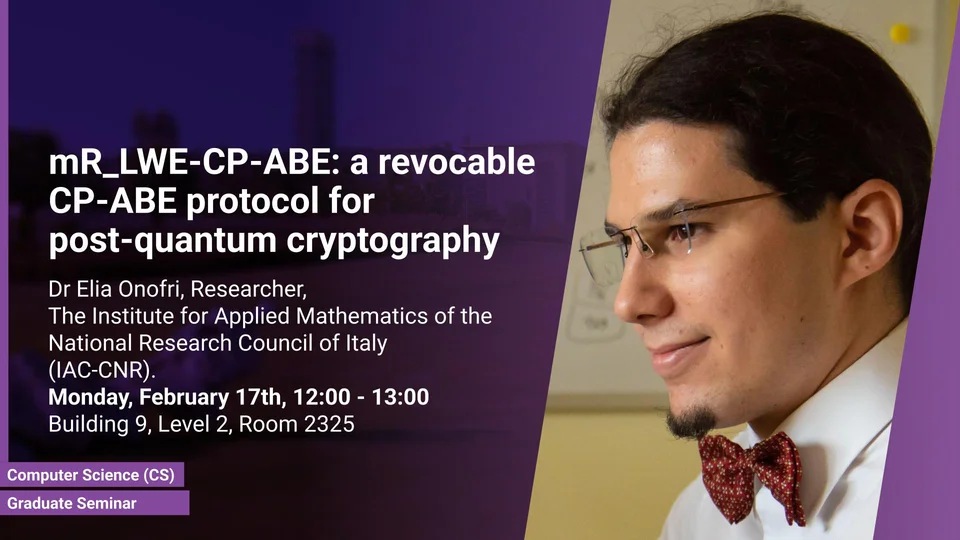

Feb 16 - Feb 22, 2025

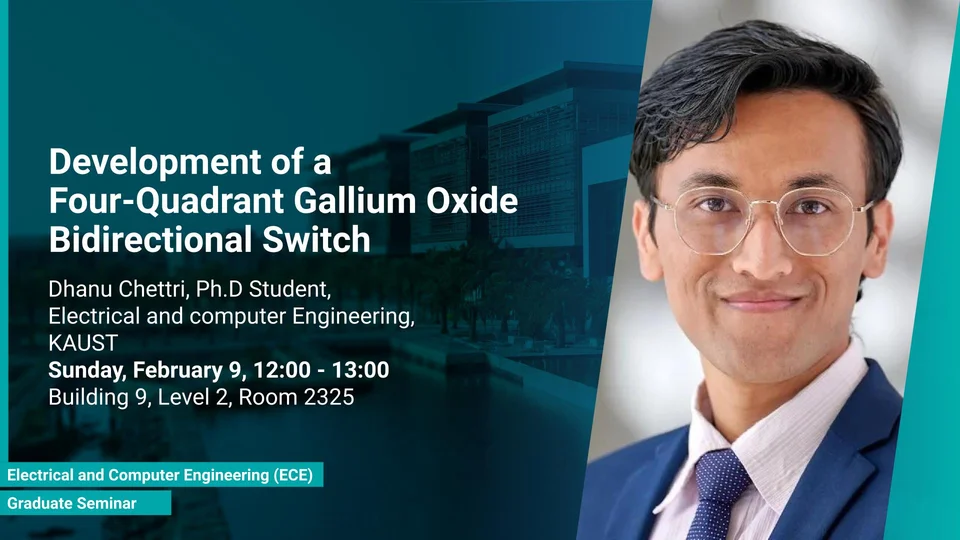

Feb 9 - Feb 15, 2025

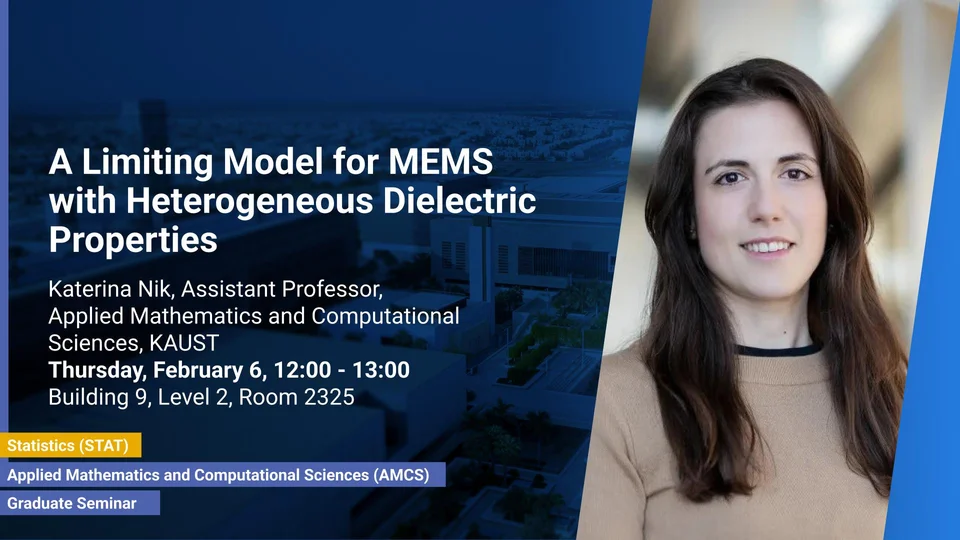

Feb 2 - Feb 8, 2025

IEEE Antennas and Propagation Society Distinguished Lecturer Workshop

Auditorium between Buildings 2 and 3

Workshops

Jan 26 - Feb 1, 2025

NumPDE Workshop: Numerical Analysis of PDEs

Sunday 26/01 (morning, 8.45-13.30): Auditorium between Buildings 2 and 3

Sunday 26/01 (afternoon, from 13.45): Building 9, Room 2322 (Lecture Hall)

Monday 27/01 and Tuesday 28/01: Auditorium between Buildings 4 and 5